Overview

This project is a multisensory interactive system designed to encourage social interaction between people who do not share a common language. It focuses on reducing social and communication barriers by using physical activity and non-verbal interaction instead of traditional screen-based solutions.

The system creates a shared experience where users collaborate through movement, visual cues, and simple game mechanics, making interaction more natural and accessible.

How it works

The system uses a distributed setup: a central display and multiple interactive controllers placed around the environment.

Users interact with the system by moving between stations and selecting answers together. The central screen displays questions, while each controller represents a possible answer. To respond, players must coordinate and press the buttons at the same time, which encourages communication and teamwork.

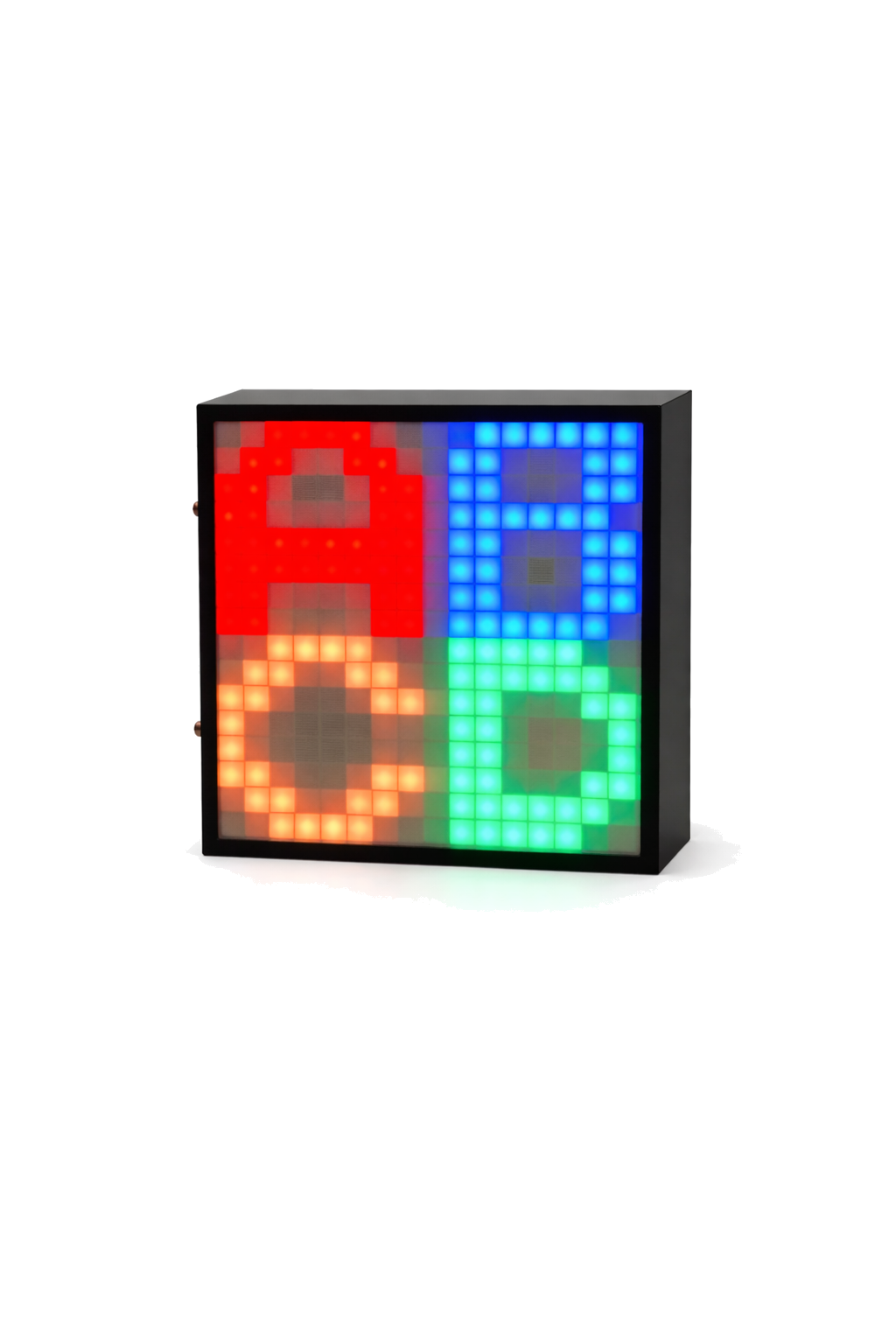
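The simultaneous-press rule can be sketched as follows. This is a minimal illustration, not the project's actual logic: the class name `AnswerSelector`, the half-second tolerance window, and the rule that two distinct buttons on the same controller must be pressed together are all assumptions made for the example.

```python
from typing import Dict, Optional, Tuple


class AnswerSelector:
    """Accepts an answer when enough distinct buttons on ONE controller
    are pressed within a short time window (assumed rule)."""

    def __init__(self, required_presses: int = 2, window_s: float = 0.5) -> None:
        self.required_presses = required_presses  # assumed: two players per answer
        self.window_s = window_s                  # assumed tolerance in seconds
        # Most recent press time for each (controller_id, button_id) pair.
        self._last_press: Dict[Tuple[str, int], float] = {}

    def register_press(
        self, controller_id: str, button_id: int, timestamp: float
    ) -> Optional[str]:
        """Record a press; return the controller id if its answer is now selected."""
        self._last_press[(controller_id, button_id)] = timestamp
        # Count distinct buttons on this controller pressed inside the window.
        recent = [
            key
            for key, t in self._last_press.items()
            if key[0] == controller_id and timestamp - t <= self.window_s
        ]
        if len(recent) >= self.required_presses:
            self._last_press.clear()  # reset for the next question
            return controller_id
        return None
```

With these assumed defaults, two presses on the same controller 0.3 s apart select that controller's answer, while presses a full second apart, or presses on different controllers, do not.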

Each controller is powered by a microcontroller and equipped with an LED matrix and physical buttons. The LED matrix provides visual feedback such as symbols, colors, and animations, allowing interaction without relying on language.
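One way to describe a language-free symbol for the LED matrix is as a small bitmap that is expanded into per-pixel colors. The sketch below assumes an 8x8 RGB matrix and uses hypothetical names (`CHECK`, `to_frame`); it is hardware-independent and leaves out the driver call that would actually push the frame to the LEDs.

```python
from typing import List, Sequence, Tuple

Color = Tuple[int, int, int]

OFF: Color = (0, 0, 0)
GREEN: Color = (0, 255, 0)

# Hypothetical 8x8 check-mark symbol: '#' is a lit pixel, '.' is off.
CHECK = [
    "........",
    ".......#",
    "......#.",
    ".....#..",
    "#...#...",
    ".#.#....",
    "..#.....",
    "........",
]


def to_frame(pattern: Sequence[str], color: Color) -> List[List[Color]]:
    """Expand a text pattern into rows of RGB tuples, one tuple per LED."""
    return [[color if cell == "#" else OFF for cell in row] for row in pattern]


frame = to_frame(CHECK, GREEN)
```

Keeping symbols as text patterns makes them easy to review and edit; an animation could then be expressed as a list of such frames shown in sequence.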

The devices communicate wirelessly using MQTT over Wi-Fi, which keeps all components synchronized in real time. The system processes inputs as they arrive and updates the game state right away, giving users immediate feedback.
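As an illustration of how button presses might travel over MQTT, the sketch below defines a topic layout and a JSON payload. The topic prefix `game`, the field names, and the helper functions are assumptions made for this example, not the project's actual message format. The code only builds and parses messages; publishing and subscribing would be handled by whichever MQTT client library the devices use.

```python
import json
from typing import Dict, Optional, Union

TOPIC_PREFIX = "game"  # hypothetical topic root


def press_topic(controller_id: str) -> str:
    """Topic a controller would publish its button presses to (assumed layout)."""
    return f"{TOPIC_PREFIX}/controllers/{controller_id}/press"


def encode_press(button_id: int, timestamp_ms: int) -> bytes:
    """Serialize one button press as a compact JSON payload."""
    message = {"button_id": button_id, "timestamp_ms": timestamp_ms}
    return json.dumps(message).encode("utf-8")


def parse_press(topic: str, payload: bytes) -> Optional[Dict[str, Union[str, int]]]:
    """Decode a press message; return None for topics that are not press topics."""
    parts = topic.split("/")
    if (
        len(parts) != 4
        or parts[0] != TOPIC_PREFIX
        or parts[1] != "controllers"
        or parts[3] != "press"
    ):
        return None
    data = json.loads(payload.decode("utf-8"))
    return {
        "controller_id": parts[2],
        "button_id": int(data["button_id"]),
        "timestamp_ms": int(data["timestamp_ms"]),
    }
```

Putting the controller id in the topic rather than the payload would let the central component subscribe to all controllers with a single wildcard filter such as `game/controllers/+/press`.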

The interaction is designed to be low-barrier and intuitive, relying on visual information, movement, and shared decision-making. By combining physical interaction with distributed system logic, the experience naturally promotes collaboration and engagement.